Healthcare is rapidly adopting Private AI to streamline operational workflows, improve patient outcomes, and increase efficiency. From clinical documentation to patient engagement, AI is transforming how care is delivered. But this change also brings a crucial duty: safeguarding private patient information. According to industry studies, healthcare data breaches remain among the most costly, making compliance with laws …

Financial institutions today are under increasing pressure to improve compliance while cutting costs. According to industry reports, stricter regulations and a rise in financial crime have pushed global compliance costs up by more than 60% over the past ten years. Due to their heavy reliance on manual processes and rule-based engines, traditional KYC and …

Rather than relying solely on large general-purpose models, businesses are turning to smaller, more specialised models tailored to specific workflows. These models offer stricter governance controls, predictable infrastructure costs, and faster responses. As a result, small language model deployment is becoming a fundamental element of contemporary industrial AI architecture. Enterprise SLM deployment methods that …

Over the past two years, enterprise AI usage has increased dramatically. However, many businesses are finding that running large language models in production presents a major operational challenge: cost. Large models have tremendous capabilities, but the main obstacle to long-term AI adoption is often the ongoing cost of operating them at scale. LLM inference cost …

Across regulated and data-sensitive industries, enterprises are moving away from oversized, general-purpose AI models and toward compact, controllable alternatives. The shift isn’t just about performance; it’s about ownership, compliance, and cost. That’s why many teams now fine-tune small language models instead of deploying massive public LLMs. Small Language Models (SLMs) provide what enterprise …

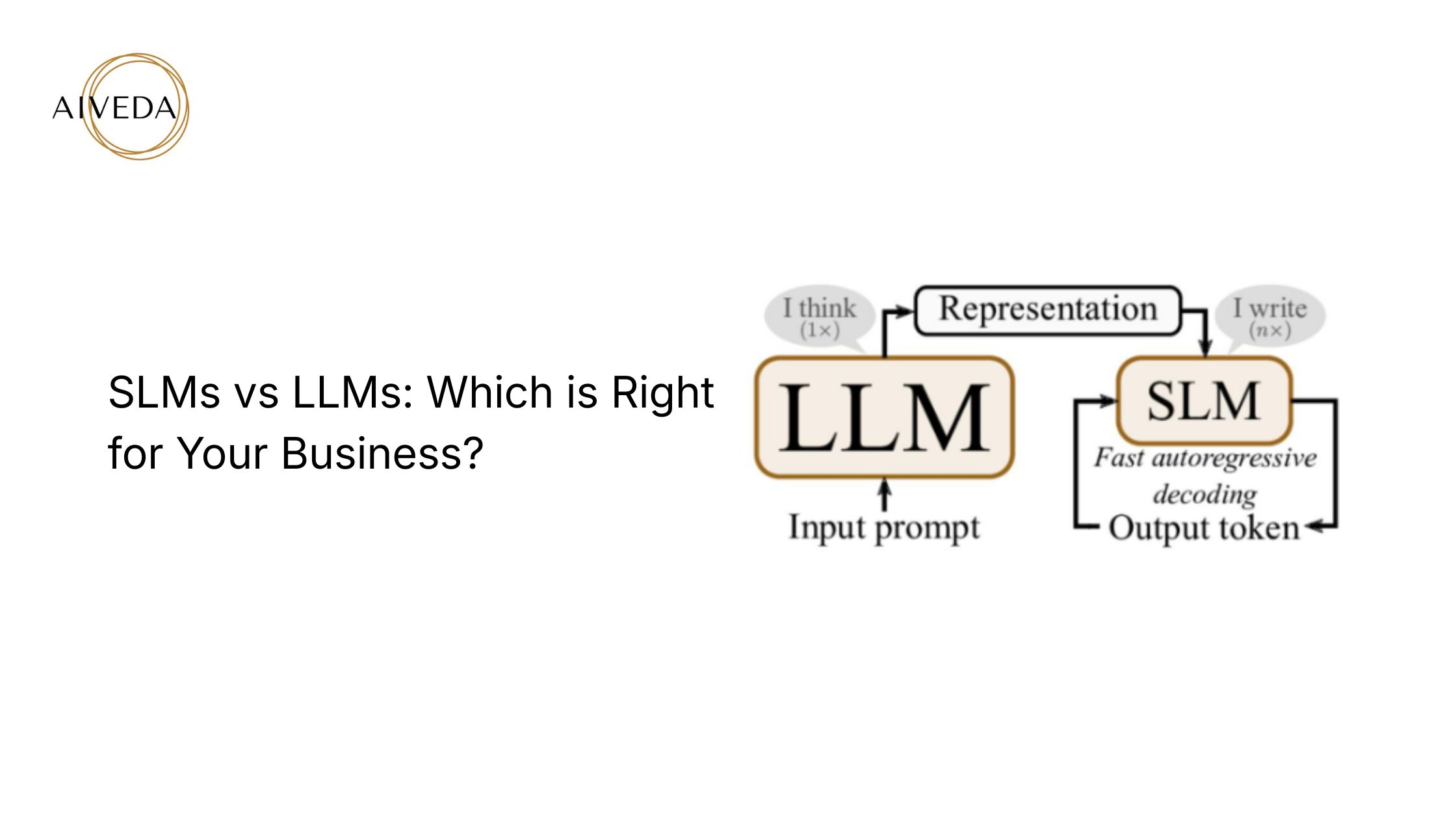

Artificial intelligence is no longer considered experimental in business. From customer service automation to internal knowledge assistants and predictive analytics, AI is becoming increasingly integrated into day-to-day operations. However, many business owners face a key decision that directly affects budget, speed, and security: SLM vs LLM. While large language models make headlines for their remarkable …

Businesses now expect quantifiable commercial results rather than being dazzled by AI demonstrations. Over the last two years, numerous organisations have adopted large language models (LLMs) in the belief that bigger is always better. At scale, this assumption did not hold up. As soon as LLMs were used in …

Businesses are quickly adopting AI and generative technologies to enhance productivity, decision-making, and customer satisfaction. Nonetheless, many leaders believe that large language models (LLMs) inevitably produce better results. This misperception leads to rising inference costs, significant infrastructure requirements, and growing concerns about data privacy and compliance. Performance in real-world …

In 2024, JPMorgan Chase developed an internal generative AI platform called DocLLM to summarise legal documents securely within its private infrastructure. The reason was clear: traditional cloud-hosted models risked exposing confidential client data. Instead of deploying massive, general-purpose models, the bank built smaller, fine-tuned ones tailored for compliance and cost efficiency. This example highlights a …

Artificial Intelligence (AI) has entered a new era in which large language models (LLMs) power everything from chatbots and copilots to knowledge retrieval and compliance automation. These massive models, such as GPT-4 or Gemini, have demonstrated groundbreaking capabilities. But their size also creates challenges: they demand enormous compute resources, incur high costs, and require specialized infrastructure that most …